Why self-driving cars may not be in your future

http://energyskeptic.com/2016/nasa-expl ... ur-future/ Pavlus, John. July 18, 2016. What NASA Could Teach Tesla about Autopilot’s Limits. Scientific American.

Decades of research have warned about the human attention span in automated cockpits

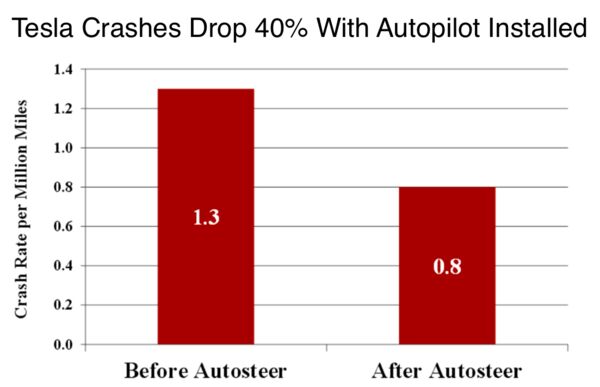

After a Tesla Model S in Autopilot mode crashed into a truck and killed its driver, the safety of self-driving cars was questioned on three counts: the Autopilot system didn’t see the truck, the driver didn’t notice it either, and so neither applied the brakes.

Who knows the dangers better than NASA, which has studied automation in cockpits and vehicles for decades (e.g. cars, the space shuttle, airplanes)? NASA describes how connected a person is to a decision-making process in terms of a loop. Driving a car yourself puts you “in the loop”: you Observe, Orient, Decide, and Act (OODA). If your car is on autopilot but you can still step in to brake or otherwise take over, you are “ON the loop”.

Airplanes fly automated, with pilots observing. But this is very different from a car. If something goes wrong the pilot has many minutes to react. The plane is 8 miles in the air.

But in a car, you have just ONE SECOND. That demands faster reflexes than a test pilot’s. There’s almost no margin for error. This means you might as well be driving manually, since you still have to pay full attention while the car is on autopilot rather than sitting in the back seat reading a book.
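To put the one-second window in perspective, here is a minimal sketch of how far a car travels before the driver can act. The speeds of 30 and 65 mph and the function name `distance_during_reaction` are illustrative assumptions, not figures from the article; only the one-second reaction time comes from the text above.

```python
# Metres per second in one mile per hour (1609.344 m per mile / 3600 s per hour).
MPH_TO_MPS = 1609.344 / 3600.0


def distance_during_reaction(speed_mph: float, reaction_s: float = 1.0) -> float:
    """Return metres covered at constant speed during the reaction time."""
    return speed_mph * MPH_TO_MPS * reaction_s


if __name__ == "__main__":
    # Assumed example speeds: a city street and a highway.
    for speed in (30, 65):
        metres = distance_during_reaction(speed)
        print(f"{speed} mph: {metres:.1f} m travelled in 1 s")
```

At an assumed highway speed of 65 mph the car covers roughly 29 metres in that single second, before any braking has even begun.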

Tesla tries to get around this by having Autopilot check that the driver’s hands are on the wheel and trigger visual and audible alerts if they are not.

But NASA has found this doesn’t work, because the better the autopilot is, the less attention the driver pays to what’s going on. It is tiring and boring to monitor a process that performs well for a long time, an effect that was named the “vigilance decrement” as far back as 1948. Experiments back then showed that vigilance drops off after just 15 minutes.

So the better the system, the more likely we are to stop paying attention. But no one would want to buy a self-driving car that they may as well be driving themselves. The whole point is that the dangerous things we already do now, like changing the radio station, eating, and talking on the phone, would be less dangerous in autopilot mode.

These findings expose a contradiction in systems like Tesla’s Autopilot. The better they work, the more they may encourage us to zone out, yet they require continuous attention to operate safely. Even if Joshua Brown, the driver killed in the crash, was not watching Harry Potter behind the wheel, his own psychology may still have conspired against him.

Tesla’s plan assumes that automation advances will eventually get around this problem.

By the way, the National Highway Traffic Safety Administration (NHTSA) already has a four-level definition of automation.

Level 1: “invisible” driver assistance (e.g. antilock brakes with electronic stability control).

Level 2: cars with two or more level 1 systems working together (e.g. cruise control and lane centering).

Level 3 “Limited Self-Driving Automation” in cars like the Model S, where “the driver is expected to be available for occasional control but with sufficiently comfortable transition time.”

Level 4 full self-driving automation

NASA warns that partial automation is inherently unsafe, but that it is also dangerous to assume that level 4, full self-driving automation, is a logical extension of level 3 (other companies such as Google and Ford appear to be trying to reach level 4).

Level 3 is probably unsuitable for cars because the 1-second reaction window is simply too short, and level 4, based on NASA’s experience, is also unlikely.

Computers do not deal well with the unexpected, that is, with sudden and unforeseen events. Self-driving cars can obey the rules of the road, but they cannot anticipate how other drivers will behave.

Without super-accurate GPS, automated cars rely on seeing the lines on the pavement to stay in their lane, but snow, rain, and fog can hide them. Self-driving cars also rely on special detailed maps showing the location of intersections, on-ramps, stop signs and so on. Very few roads are mapped to this degree, or kept updated with construction, detours, conversions to roundabouts, new stop lights, and so on. The cars don’t detect potholes, puddles, or oil spots well and can be confounded by the shadows of overpasses. And if a collision is unavoidable, does the car run over the child, or swerve into a light pole and potentially kill the driver? (Boudette 2016).

Excerpts from John Markoff. January 17, 2016. For Now, Self-Driving Cars Still Need Humans. New York Times.

Self-driving cars will require human supervision. On many occasions, the cars will tell their human drivers, “Here, you take the wheel,” when they encounter complex driving situations or emergencies. In the automotive industry, this is referred to as the hand-off problem, and automotive engineers say there is no easy solution to make a driver who may be distracted by texting, reading email or watching a movie perk up and retake control of the car in the fraction of a second that is required in an emergency. The danger is that by inducing human drivers to pay even less attention to driving, the safety technology may be creating new hazards. The ability to know if the driver is ready, and if you’re giving them enough notice to hand off, is a really tricky question.

The Tesla performed well in freeway driving, but on city streets and country roads, Autopilot performance could be described as hair-raising. The car, which uses only a camera to track the roadway by identifying lane markers, did not follow the curves smoothly or slow down when approaching turns. On a 220-mile drive to Lake Tahoe from Palo Alto, Calif., Dr. Thrun said he had to intervene more than a dozen times.

Like the Tesla, the new autonomous Nissan models will require human oversight and even their most advanced models aren’t autonomous in snow, heavy rain and some nighttime driving.

You could propose various fixes, but none of them gets around the one second the driver has to react. That is not fixable.

References

Boudette, N. June 4, 2016. 5 Things That Give Self-Driving Cars Headaches. New York Times.